June 23, 2014

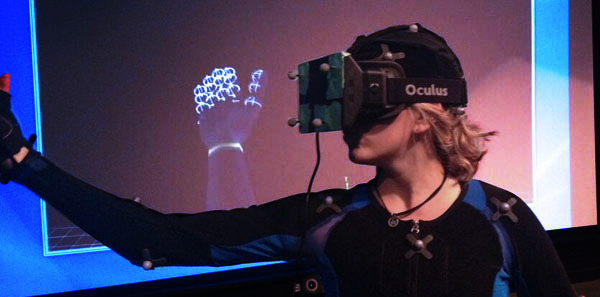

vIVE – Very Immersive Virtual Experience (Virtual Reality and MOCAP)

Written by Denise Quesnel

vIVE – Very Immersive Virtual Experience at S3D Centre

The initial goal of this project was to use passive optical motion capture to track an Oculus Rift and create an immersive virtual experience. The physical Oculus Rift is motion captured, and the data is piped to Unity in realtime with low latency to drive the virtual Oculus Rift. The rendered output is wirelessly transmitted to the device. The result is an untethered virtual experience, which can include hands, feet or even a full-body avatar.

The future goals for this project are:

- Develop open-source tools to create an immersive virtual experience

- Develop or adopt tools and protocols to allow virtual spaces to overlap

- Using observation and experimentation, explore new user experiences, interactivity and UI/UX language and protocols

The initial system was built using the following hardware and software:

- 40-camera Vicon motion capture system for realtime mocap

- Custom data translation code to facilitate communication between Vicon and Unity

- Unity Pro for realtime rendering

- Autodesk Maya for scene creation

- Dell workstations and NVIDIA graphics cards for data processing and rendering

- Paralinx Arrow for wireless HDMI (untethered operator)
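
The custom data translation code itself is not shown in this post. As a rough illustration of the kind of work such a layer has to do, here is a minimal Python sketch that converts one tracked pose from a capture coordinate frame into a game-engine frame. It assumes the capture system reports positions in millimetres in a right-handed, Z-up frame and that the engine expects metres in a left-handed, Y-up frame. The `Pose` type and the `mocap_to_engine` function are hypothetical names made up for this example, and the axis conventions should be checked against the actual capture and engine settings before relying on it.

```python
from dataclasses import dataclass
from typing import Tuple

MM_PER_M = 1000.0  # assumption: capture positions arrive in millimetres


@dataclass(frozen=True)
class Pose:
    """Hypothetical container for one tracked rigid body."""
    position: Tuple[float, float, float]
    rotation: Tuple[float, float, float, float]  # quaternion as (x, y, z, w)


def mocap_to_engine(pose: Pose) -> Pose:
    """Convert a pose from an assumed right-handed, Z-up, millimetre frame
    to an assumed left-handed, Y-up, metre frame."""
    x, y, z = pose.position
    qx, qy, qz, qw = pose.rotation

    # Swapping Y and Z makes the capture "up" axis the engine "up" axis
    # and flips handedness in the same step; dividing converts mm to m.
    position = (x / MM_PER_M, z / MM_PER_M, y / MM_PER_M)

    # The same axis swap applied to a rotation: permute the vector part
    # and negate it, because the handedness flip reverses the rotation sense.
    rotation = (-qx, -qz, -qy, qw)

    return Pose(position, rotation)
```

In a pipeline like the one described here, a function of this shape would run once per captured frame for each tracked object (headset, hands, feet) before the pose is handed to the renderer, so it needs to stay cheap to keep latency low.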

The system has been extended to support:

- NaturalPoint (Motive) capture system

See the entirety of the project at S3D Centre’s vIVE project page.